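So far in this course, you have learned about the individual, business, and legal aspects of digital accessibility, and the basics of digital accessibility conformance. You have explored specific topics related to inclusive design and coding, including when to use ARIA versus HTML, how to measure color contrast, and when JavaScript is essential.

In the remaining modules, we shift from designing and building to testing for accessibility. We'll share a three-step testing process that includes automated, manual, and assistive technology testing tools and techniques. We'll use the same demo throughout these testing modules to take a web page from inaccessible to accessible.

Each type of test (automated, manual, and assistive technology) is critical to achieving the most accessible product possible. Our tests rely on the Web Content Accessibility Guidelines (WCAG) 2.1 conformance level A and AA standards.

Remember that your industry, product type, local and country laws and policies, or overall accessibility goals will dictate which guidelines to follow and which levels to meet. If your project doesn't require a specific standard, the recommendation is to follow the latest version of WCAG. For general information on accessibility audits, conformance types and levels, WCAG, and POUR, refer to "How is digital accessibility measured?"

As you now know, accessibility conformance is not the full story when it comes to supporting people with disabilities. It is a good starting point, though, because it provides a metric you can test against. Beyond conformance testing, we encourage you to take the following actions to help create more inclusive apps and services:

- Run usability tests with people with disabilities.

- Hire people with disabilities to work on your team.

- Consult an individual or company with digital accessibility expertise.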

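Automated testing basics

Automated accessibility testing uses software to scan your digital product for accessibility issues against pre-defined accessibility conformance standards.

Advantages of automated accessibility tests:

- Tests are quick to repeat at different stages of the product lifecycle.

- They take only a few steps to run and return results very quickly.

- Little accessibility knowledge is needed to run the tests or understand the results.

Disadvantages of automated accessibility tests:

- Automated tools don't catch all of the accessibility errors in your product.

- They report false positives (a reported issue that isn't a true WCAG violation).

- Multiple tools may be needed for different product types and roles.

Automated testing is a great first step for checking the accessibility of your website or app, but not all checks can be automated. The Manual accessibility testing module goes into more detail on how to check the accessibility of elements that cannot be tested automatically.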

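Types of automated tools

In 1996, the Center for Applied Special Technology (CAST) developed one of the first online automated accessibility testing tools, called the Bobby Report. Today, there are over 100 automated testing tools to choose from!

Automated tools come in many forms, including accessibility browser extensions, code linters, desktop and mobile applications, and online dashboards. You can even use open-source APIs to build your own automated tooling.

Which automated tool you decide to use can depend on many factors, including:

- Which conformance standards and levels are you testing against? This may include WCAG 2.2, WCAG 2.1, U.S. Section 508, or a modified list of accessibility rules.

- What type of digital product are you testing? This could be a website, web app, native mobile app, PDF, kiosk, or other product.

- At what stage of the software development lifecycle are you testing your product?

- How much time does it take to set up and use the tool? For an individual, a team, or a company?

- Who is conducting the test: designers, developers, QA, or others?

- How often do you want accessibility to be checked? What details should the report include? Should issues be linked directly to a ticketing system?

- Which tools work best in your environment? For your team?

There are many other factors to consider as well. For more information on how to select the best tool for you and your team, see WAI's article "Selecting Web Accessibility Evaluation Tools."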

Demo: Automated test

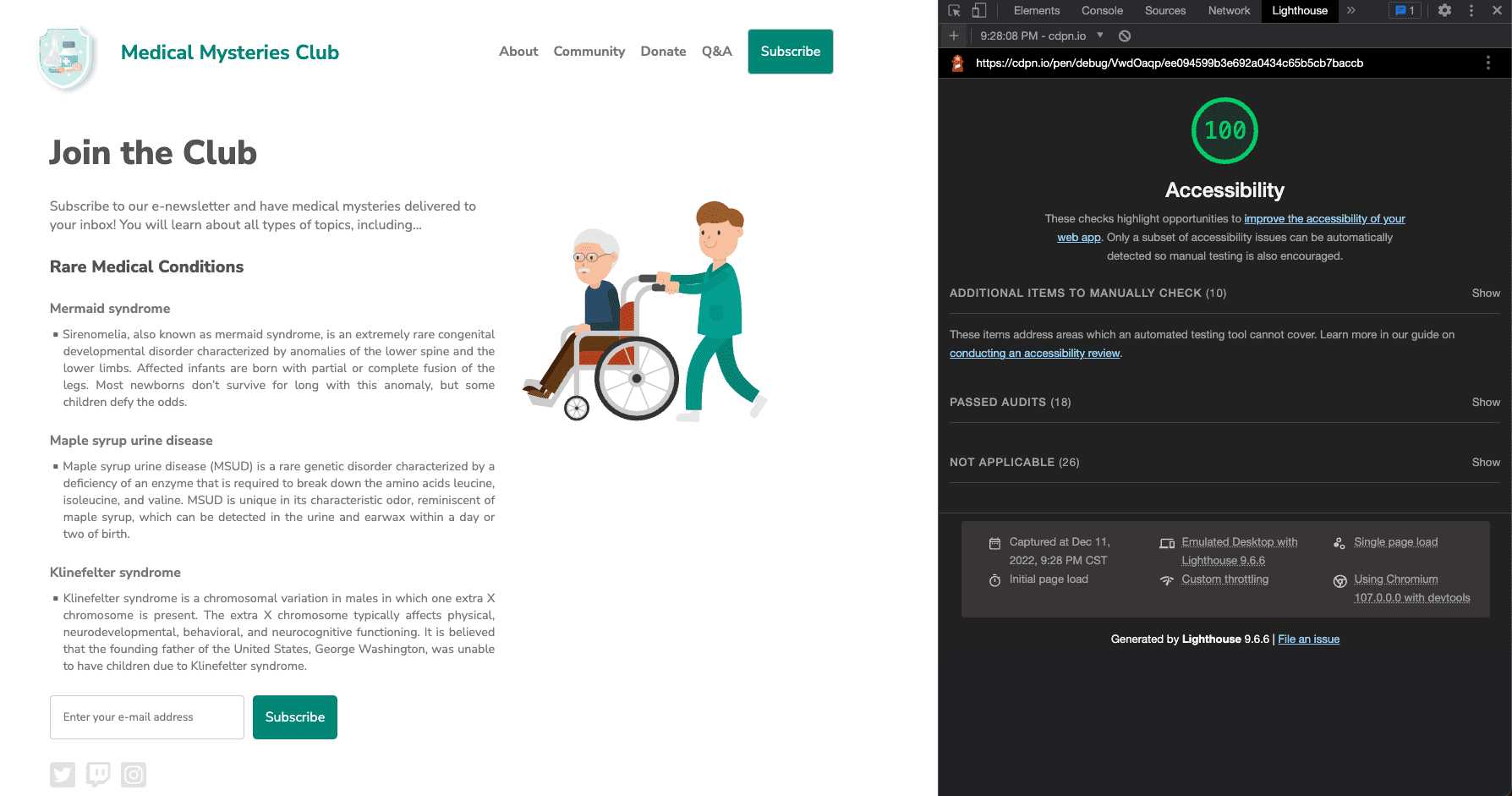

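For the automated accessibility testing demo, we'll use Chrome's Lighthouse. Lighthouse is an open-source, automated tool created to improve the quality of web pages through different types of audits, such as performance, SEO, and accessibility.

Our demo is a website built for a made-up organization, the Medical Mysteries Club. The site is intentionally made inaccessible for the demo. You may notice some of its accessibility issues yourself, and our automated test will catch some (but not all) of them.

Step 1

Using your Chrome browser, install the Lighthouse extension.

There are many ways to integrate Lighthouse into your testing workflow. We'll use the Chrome extension for this demo.

Step 2

We have built a demo in CodePen. View it in debug mode to proceed with the next tests. This is important because debug mode removes the <iframe> that surrounds the demo web page, which may interfere with some testing tools.

Learn more about CodePen's debug mode.

Step 3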

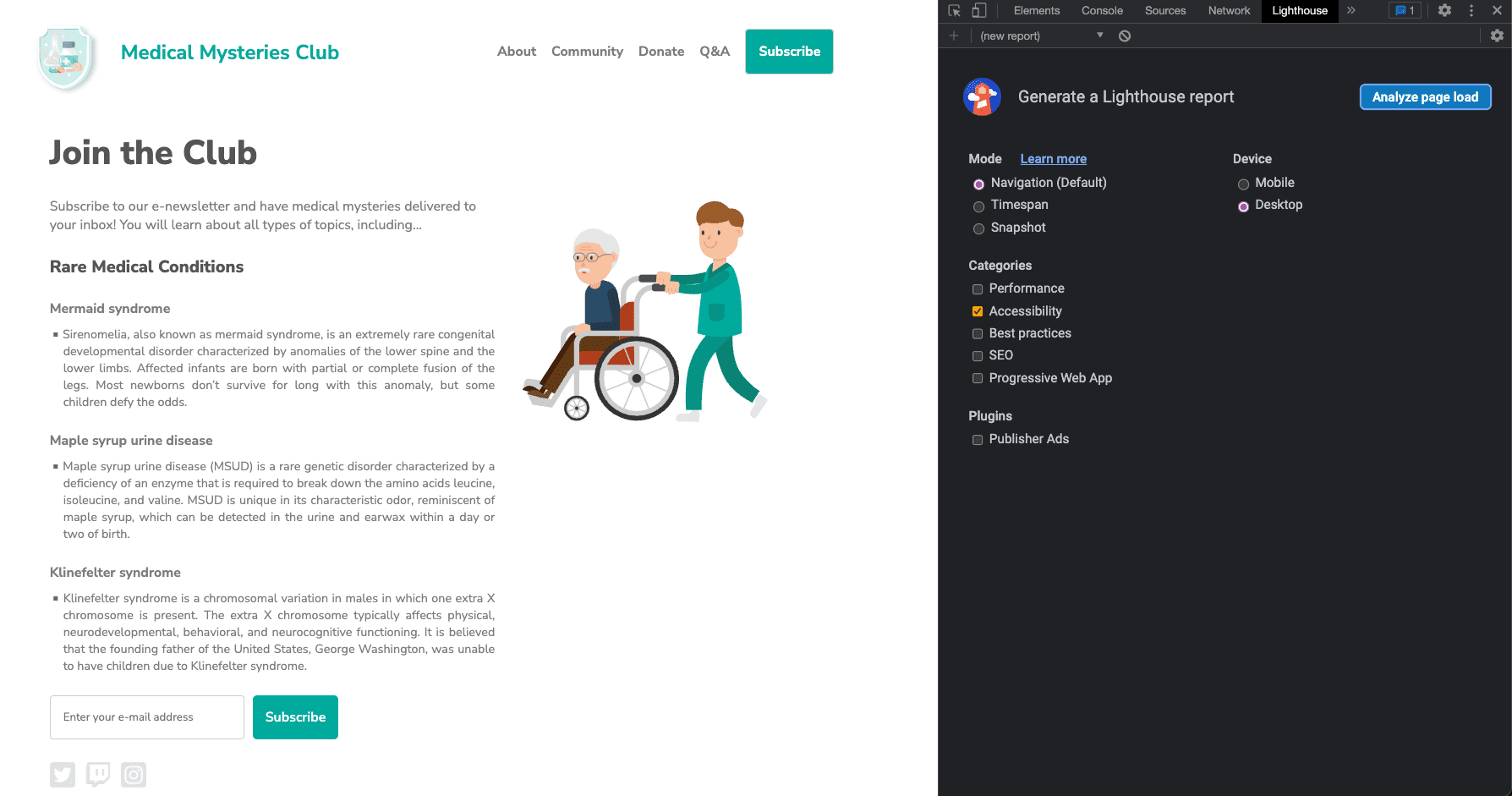

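Open Chrome DevTools and navigate to the Lighthouse tab. Clear all of the category options except "Accessibility." Keep the mode as the default and choose the device type to run the tests on.

Step 4

Click Analyze page load and give Lighthouse time to run its tests.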

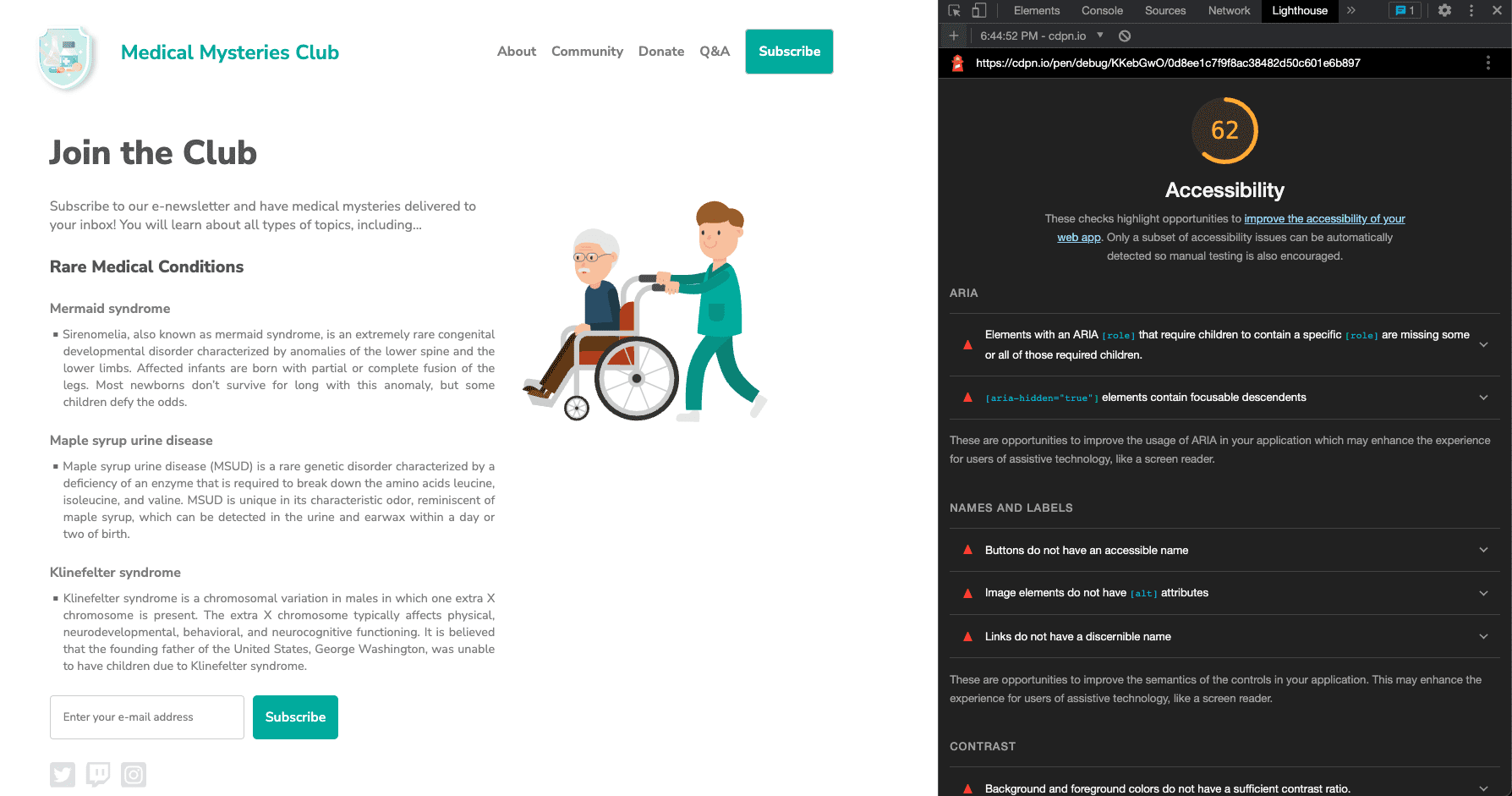

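Once the tests are complete, Lighthouse displays a score that measures how accessible the product you're testing is. The Lighthouse score is calculated from the number of issues, the issue types, and the impact of the detected issues on users.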
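To make the idea of an impact-weighted score concrete, here is a minimal Python sketch. Lighthouse's documentation describes the accessibility score as a weighted average of pass/fail audits; the audit names and weights below are hypothetical values chosen for illustration, not Lighthouse's actual weighting table.

```python
def weighted_score(results, weights):
    """Return a 0-100 score: weight of passed audits divided by total weight."""
    total = sum(weights[name] for name in results)
    passed = sum(weights[name] for name, ok in results.items() if ok)
    return round(100 * passed / total) if total else 100


# Hypothetical weights: a higher weight stands for a larger impact on users.
weights = {"button-name": 10, "image-alt": 10, "color-contrast": 7,
           "list": 7, "tabindex": 7}
# True means the audit passed, False means it failed.
results = {"button-name": False, "image-alt": False, "color-contrast": False,
           "list": True, "tabindex": True}

print(weighted_score(results, weights))
```

Because each audit either passes or fails, fixing a high-weight issue in this model moves the score more than fixing a low-weight one.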

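Beyond the score, the Lighthouse report includes detailed information about the issues it detected, along with links to resources that explain how to fix them. The report also lists the tests that passed or are not applicable, and additional items to check manually.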
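If you export a report as JSON, the same grouping can be reproduced in a few lines. The sketch below assumes the exported object has a `categories.accessibility.auditRefs` list and an `audits` map whose entries carry `title`, `score`, and `scoreDisplayMode` fields. The `sample_report` dictionary is a hand-written stand-in, so check the field names against a real export before relying on them.

```python
def summarize(report):
    """Group accessibility audit titles by outcome."""
    groups = {"failed": [], "passed": [], "manual": [], "not_applicable": []}
    for ref in report["categories"]["accessibility"]["auditRefs"]:
        audit = report["audits"][ref["id"]]
        mode = audit.get("scoreDisplayMode")
        if mode == "manual":
            groups["manual"].append(audit["title"])
        elif mode == "notApplicable":
            groups["not_applicable"].append(audit["title"])
        elif audit.get("score") == 1:
            groups["passed"].append(audit["title"])
        else:
            # Simplification: anything else is treated as a failure here.
            groups["failed"].append(audit["title"])
    return groups


# Hand-written stand-in for an exported report (titles are illustrative).
sample_report = {
    "categories": {"accessibility": {"auditRefs": [
        {"id": "button-name"}, {"id": "image-alt"},
        {"id": "focus-traps"}, {"id": "video-caption"}]}},
    "audits": {
        "button-name": {"title": "Button name", "score": 0,
                        "scoreDisplayMode": "binary"},
        "image-alt": {"title": "Image alt", "score": 1,
                      "scoreDisplayMode": "binary"},
        "focus-traps": {"title": "Focus traps", "score": None,
                        "scoreDisplayMode": "manual"},
        "video-caption": {"title": "Video captions", "score": None,
                          "scoreDisplayMode": "notApplicable"},
    },
}

for outcome, titles in summarize(sample_report).items():
    print(outcome, titles)
```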

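Step 5

Now, let's go through an example of each automated accessibility issue that was discovered and fix the relevant styles and markup.

Issue 1: ARIA roles

The first issue states: "Elements with an ARIA [role] that require children to contain a specific [role] are missing some or all of those required children. Some ARIA parent roles must contain specific child roles to perform their intended accessibility functions." Learn more about ARIA role rules.

In our demo, the newsletter subscribe button fails: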

<button role="list" type="submit" tabindex="1">Subscribe</button>

Let's fix it.

The "Subscribe" button next to the input field has an incorrect ARIA role applied to it. In this case, the role can be removed completely.

<button type="submit" tabindex="1">Subscribe</button>
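The rule behind this issue can be approximated in a few lines of Python. This is a simplified sketch, not how Lighthouse implements the audit: it only knows the two parent roles listed in `REQUIRED_CHILDREN` (a partial, illustrative map), only looks at explicit `role` attributes on direct children, and ignores implicit roles such as `<li>`.

```python
from html.parser import HTMLParser

# Partial, illustrative map of parent roles to the child roles they require.
REQUIRED_CHILDREN = {"list": {"listitem"}, "tablist": {"tab"}}
VOID_TAGS = {"area", "base", "br", "col", "embed", "hr", "img", "input",
             "link", "meta", "source", "track", "wbr"}


class RequiredChildrenChecker(HTMLParser):
    def __init__(self):
        super().__init__()
        self.stack = []     # open elements as [tag, role, has_required_child]
        self.failures = []  # (tag, role) pairs missing a required child

    def handle_starttag(self, tag, attrs):
        role = dict(attrs).get("role")
        # Mark the parent as satisfied if this child carries a role it needs.
        if self.stack and role in REQUIRED_CHILDREN.get(self.stack[-1][1], ()):
            self.stack[-1][2] = True
        if tag not in VOID_TAGS:
            self.stack.append([tag, role, False])

    def handle_endtag(self, tag):
        if tag not in [entry[0] for entry in self.stack]:
            return  # stray closing tag
        while True:
            open_tag, role, satisfied = self.stack.pop()
            if role in REQUIRED_CHILDREN and not satisfied:
                self.failures.append((open_tag, role))
            if open_tag == tag:
                break


def missing_required_children(html):
    checker = RequiredChildrenChecker()
    checker.feed(html)
    checker.close()
    return checker.failures


before = '<button role="list" type="submit" tabindex="1">Subscribe</button>'
after = '<button type="submit" tabindex="1">Subscribe</button>'
print(missing_required_children(before))
print(missing_required_children(after))
```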

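Issue 2: ARIA hidden

"[aria-hidden="true"] elements contain focusable descendants. Focusable descendants within an [aria-hidden="true"] element prevent those interactive elements from being available to users of assistive technologies like screen readers." Learn more about aria-hidden rules.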

<input type="email" placeholder="Enter your e-mail address" aria-hidden="true" tabindex="-1" required>

Let's fix it.

The input field had an aria-hidden="true" attribute applied to it. Adding this attribute hides the element (and everything nested under it) from assistive technology.

<input type="email" placeholder="Enter your e-mail address" tabindex="-1" required>

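In this case, you should remove this attribute from the input so that people using assistive technology can access the form field and enter information into it.

Issue 3: Button name

"Buttons do not have an accessible name. When a button doesn't have an accessible name, screen readers announce it as 'button', making it unusable for users who rely on screen readers."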

<button role="list" type="submit" tabindex="1">Subscribe</button>

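Let's fix it.

When you remove the inaccurate ARIA role from the button element in issue 1, the word "Subscribe" becomes the accessible button name. This functionality is built into the semantic HTML button element. There are additional pattern options to consider for more complex situations.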

<button type="submit" tabindex="1">Subscribe</button>
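Why does removing the role restore the name? The following Python sketch models one simplified explanation: only some roles take their accessible name from their text content, and `list` is not one of them. The `NAME_FROM_CONTENT` set is a small illustrative subset, and the check considers only `aria-label` and text content (not `aria-labelledby` or `title`), so treat it as an approximation rather than a full accessible-name computation.

```python
from html.parser import HTMLParser

# Illustrative subset of roles whose name can come from their text content.
NAME_FROM_CONTENT = {"button", "link"}


class ButtonNameChecker(HTMLParser):
    def __init__(self):
        super().__init__()
        self.current = None  # state for the <button> currently being read
        self.unnamed = []    # effective role of each button without a name

    def handle_starttag(self, tag, attrs):
        if tag == "button":
            attributes = dict(attrs)
            self.current = {
                "role": attributes.get("role") or "button",
                "label": (attributes.get("aria-label") or "").strip(),
                "text": "",
            }

    def handle_data(self, data):
        if self.current is not None:
            self.current["text"] += data

    def handle_endtag(self, tag):
        if tag == "button" and self.current is not None:
            button = self.current
            # Text content only counts for roles that are named from content.
            text = ""
            if button["role"] in NAME_FROM_CONTENT:
                text = button["text"].strip()
            if not (button["label"] or text):
                self.unnamed.append(button["role"])
            self.current = None


def unnamed_buttons(html):
    checker = ButtonNameChecker()
    checker.feed(html)
    checker.close()
    return checker.unnamed


before = '<button role="list" type="submit" tabindex="1">Subscribe</button>'
after = '<button type="submit" tabindex="1">Subscribe</button>'
print(unnamed_buttons(before))
print(unnamed_buttons(after))
```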

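Issue 4: Image alt attributes

"Image elements do not have [alt] attributes. Informative elements should aim for short, descriptive alternate text. Decorative elements can be ignored with an empty alt attribute." Learn more about image alternative text rules.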

<a href="index.html">
  <img src="https://upload.wikimedia.org/wikipedia/commons/….png">
</a>

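Let's fix it.

Because the logo image is also a link, you know from the images module that it is called an actionable image and requires alternative text that describes the purpose of the image. Normally, the first image on a page is a logo, so you can reasonably assume your AT users will know this, and you may decide not to add that extra context to the image description.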

<a href="index.html">
  <img src="https://upload.wikimedia.org/wikipedia/commons/….png"
       alt="Go to the home page.">
</a>
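A check for missing alt attributes is straightforward to sketch with Python's standard HTML parser. The example below is a minimal illustration rather than the audit Lighthouse runs, and `logo.png` is a placeholder file name. An empty `alt=""` counts as present, since that is how decorative images are marked.

```python
from html.parser import HTMLParser


class MissingAltFinder(HTMLParser):
    def __init__(self):
        super().__init__()
        self.missing = []  # src of every <img> that has no alt attribute

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "img" and "alt" not in attributes:
            self.missing.append(attributes.get("src", ""))


def images_missing_alt(html):
    finder = MissingAltFinder()
    finder.feed(html)
    finder.close()
    return finder.missing


# "logo.png" is a placeholder for the demo's logo URL.
before = '<a href="index.html"><img src="logo.png"></a>'
after = '<a href="index.html"><img src="logo.png" alt="Go to the home page."></a>'
print(images_missing_alt(before))
print(images_missing_alt(after))
```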

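Issue 5: Link text

"Links do not have a discernible name. Link text (and alternate text for images, when used as links) that is discernible, unique, and focusable improves the navigation experience for screen reader users." Learn more about link text rules.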

<a href="#!"><svg><path>...</path></svg></a>

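Let's fix it.

All of the actionable images on the page must include information about where the link will send users. One way to remedy this issue is to add alternative text that describes the purpose of the image, as you did for the logo image in the example above. This works well for an image that uses an <img> tag, but <svg> tags cannot use this method.

For the social media icons, which use <svg> tags, you have several options: use a different alternative description pattern that targets SVGs, add the information between the <a> and <svg> tags and then hide it visually, add a supported ARIA attribute, or use another approach. Depending on your environment and code restrictions, one method might be preferable to another.

Let's use the simplest pattern with the most assistive technology coverage: adding role="img" to the <svg> tag and including a <title> element.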

<a href="#!">
  <svg role="img">
    <title>Connect on our Twitter page.</title>
    <path>...</path>
  </svg>
</a>
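A rough way to find links without a discernible name is to collect, for each <a>, its text content, the alt text of nested images, and the <title> of nested SVGs. The Python sketch below does exactly that and nothing more; it is an approximation of the idea, not the full accessible-name algorithm, and the `d` attribute in the sample path is made up.

```python
from html.parser import HTMLParser


class LinkNameChecker(HTMLParser):
    def __init__(self):
        super().__init__()
        self.in_link = self.in_svg = self.in_title = False
        self.href = ""
        self.name = ""
        self.unnamed = []  # href of every link that ends up with no name

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "a":
            self.in_link = True
            self.href = attributes.get("href", "")
            self.name = attributes.get("aria-label") or ""
        elif self.in_link and tag == "img":
            self.name += attributes.get("alt") or ""
        elif self.in_link and tag == "svg":
            self.in_svg = True
        elif self.in_svg and tag == "title":
            self.in_title = True

    def handle_data(self, data):
        # Inside an SVG, only the <title> text contributes to the name.
        if self.in_link and (self.in_title or not self.in_svg):
            self.name += data

    def handle_endtag(self, tag):
        if tag == "title":
            self.in_title = False
        elif tag == "svg":
            self.in_svg = False
        elif tag == "a" and self.in_link:
            if not self.name.strip():
                self.unnamed.append(self.href)
            self.in_link = False


def unnamed_links(html):
    checker = LinkNameChecker()
    checker.feed(html)
    checker.close()
    return checker.unnamed


before = '<a href="#!"><svg><path d="M0 0h1v1z"></path></svg></a>'
after = ('<a href="#!"><svg role="img"><title>Connect on our Twitter page.'
         '</title><path d="M0 0h1v1z"></path></svg></a>')
print(unnamed_links(before))
print(unnamed_links(after))
```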

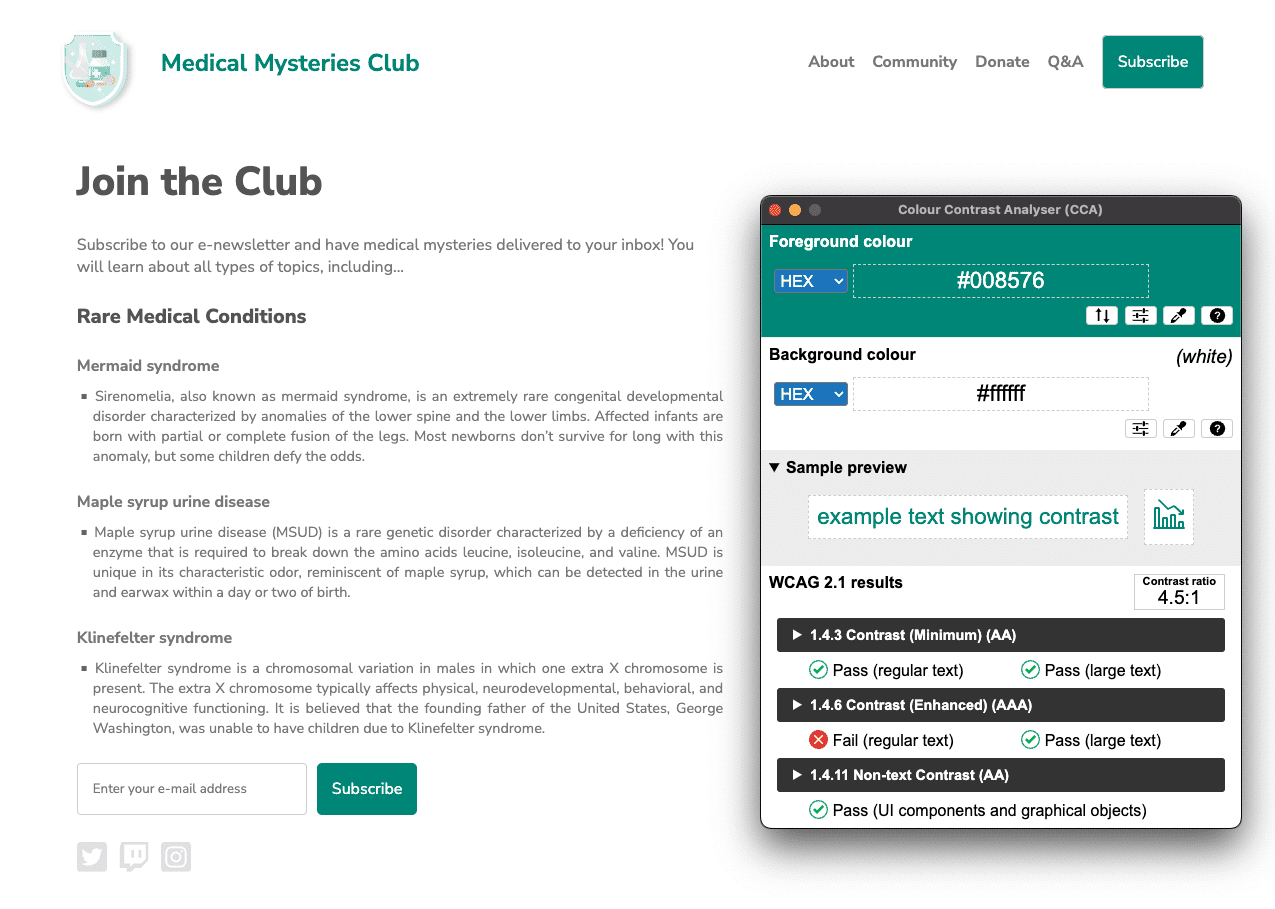

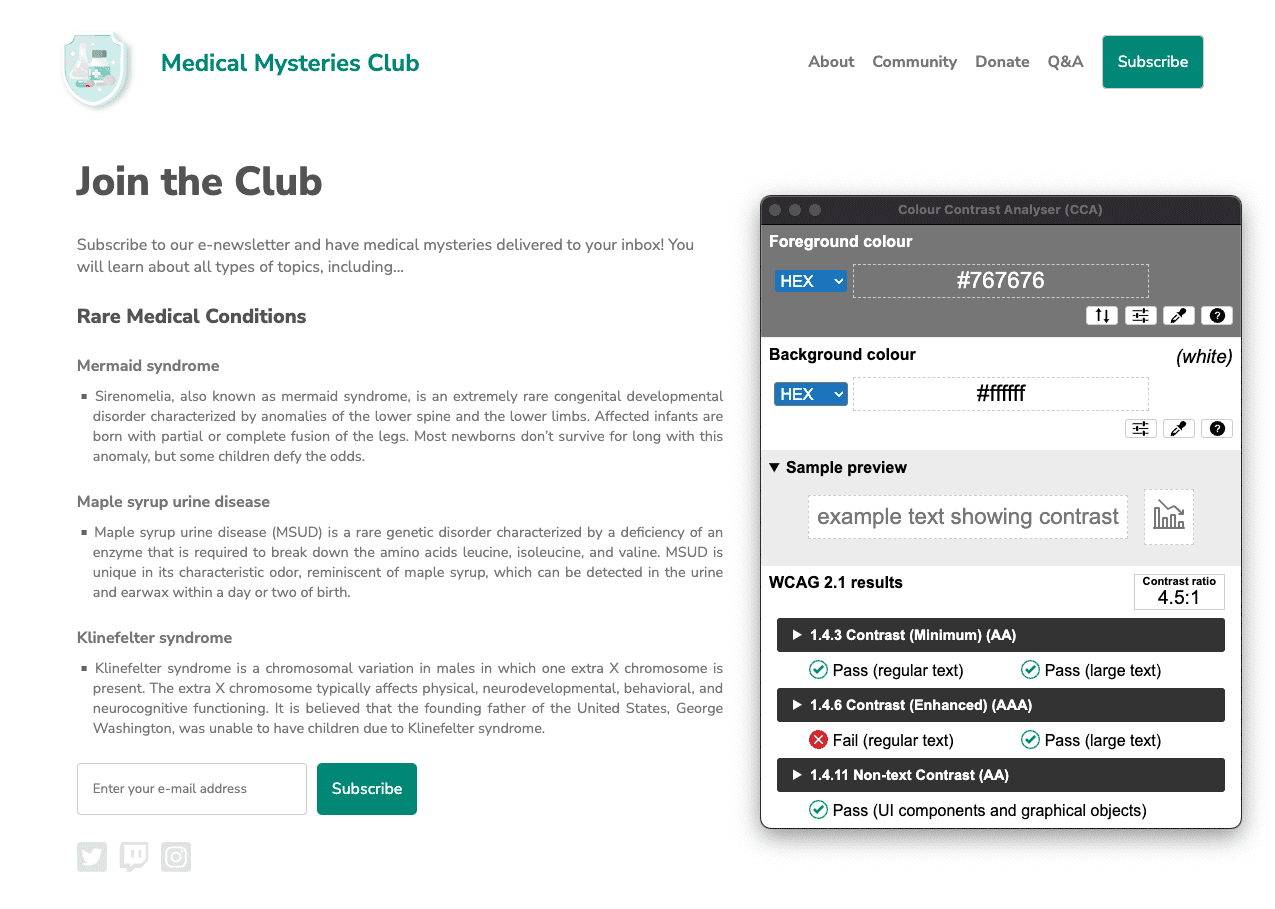

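Issue 6: Color contrast

"Background and foreground colors do not have a sufficient contrast ratio. Low-contrast text is difficult or impossible for many users to read." Learn more about color contrast rules.

Two examples were reported:

- Teal text #01aa9d on a #ffffff background: color contrast ratio of 2.9:1.

- Gray text #7c7c7c on a #ffffff background: color contrast ratio of 4.2:1.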

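Let's fix it.

Many color contrast issues were detected on the web page. As you learned in the color and contrast module, regular-sized text (less than 18pt / 24px) has a color contrast requirement of 4.5:1, while large-sized text (at least 18pt / 24px, or 14pt / 18.5px bold) and essential icons must meet the 3:1 requirement.

For the page title, the teal text needs to meet the 3:1 color contrast requirement because it is large-sized text at 24px. The teal buttons, however, are considered regular-sized text at 16px bold, so they must meet the 4.5:1 color contrast requirement.

In this case, we could find a teal that is dark enough to meet 4.5:1, or we could increase the button text size to 18.5px bold and change the teal value slightly. Either method stays in line with the design aesthetics.

All of the gray text on the white background also fails the color contrast requirement, except for the two largest headings on the page. This text must be darkened to meet the 4.5:1 color contrast requirement.

- Updated teal text #008576, with the background kept at #ffffff: color contrast ratio of 4.5:1.

- Updated gray text #767676, with the background kept at #ffffff: color contrast ratio of 4.5:1.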
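The ratios quoted in this section can be reproduced with the relative luminance and contrast ratio formulas defined in WCAG 2.1. The Python sketch below implements those formulas for six-digit hex colors; `required_ratio` is a hypothetical helper that encodes the size thresholds as this module states them.

```python
def relative_luminance(hex_color):
    """WCAG 2.1 relative luminance of an sRGB color given as '#rrggbb'."""
    value = hex_color.lstrip("#")
    channels = [int(value[i:i + 2], 16) / 255 for i in (0, 2, 4)]
    r, g, b = [c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4
               for c in channels]
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(foreground, background):
    """Contrast ratio (lighter + 0.05) / (darker + 0.05), from 1 to 21."""
    lighter, darker = sorted(
        (relative_luminance(foreground), relative_luminance(background)),
        reverse=True)
    return (lighter + 0.05) / (darker + 0.05)


def required_ratio(size_px, bold=False):
    """Hypothetical helper: 3:1 for large text, 4.5:1 otherwise."""
    large = size_px >= 24 or (bold and size_px >= 18.5)
    return 3.0 if large else 4.5


for color in ("#01aa9d", "#7c7c7c", "#008576", "#767676"):
    print(color, round(contrast_ratio(color, "#ffffff"), 2))
```

With these functions, the original teal falls short of even the 3:1 large-text threshold, while both replacement colors clear 4.5:1 against white.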

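Issue 7: List structure

"List items (<li>) are not contained within <ul> or <ol> parent elements.

Screen readers require list items (<li>) to be contained within a parent <ul> or <ol> to be announced properly."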

<div class="ul">
  <li><a href="#">About</a></li>
  <li><a href="#">Community</a></li>
  <li><a href="#">Donate</a></li>
  <li><a href="#">Q&A</a></li>
  <li><a href="#">Subscribe</a></li>
</div>

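Let's fix it.

In this demo, we used a CSS class to simulate an unordered list instead of using a <ul> tag. Writing the code this way stripped out the inherent semantic HTML features built into that tag. Replacing the class with a real <ul> tag and modifying the related CSS resolves this accessibility issue.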

<ul>
  <li><a href="#">About</a></li>
  <li><a href="#">Community</a></li>
  <li><a href="#">Donate</a></li>
  <li><a href="#">Q&A</a></li>
  <li><a href="#">Subscribe</a></li>
</ul>
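The structural rule here (every <li> needs a list element as its direct parent) lends itself to a small stack-based check. The following Python sketch counts list items whose immediate parent is not <ul>, <ol>, or <menu>; it is a simplified illustration and does not handle ARIA roles such as role="list".

```python
from html.parser import HTMLParser

VOID_TAGS = {"area", "base", "br", "col", "embed", "hr", "img", "input",
             "link", "meta", "source", "track", "wbr"}
LIST_PARENTS = {"ul", "ol", "menu"}


class OrphanListItemFinder(HTMLParser):
    def __init__(self):
        super().__init__()
        self.stack = []   # tags of the currently open elements
        self.orphans = 0  # number of <li> elements outside a list parent

    def handle_starttag(self, tag, attrs):
        if tag == "li":
            parent = self.stack[-1] if self.stack else None
            if parent not in LIST_PARENTS:
                self.orphans += 1
        if tag not in VOID_TAGS:
            self.stack.append(tag)

    def handle_endtag(self, tag):
        if tag in self.stack:
            # Pop back to (and including) the matching open tag.
            while self.stack.pop() != tag:
                pass


def count_orphan_list_items(html):
    finder = OrphanListItemFinder()
    finder.feed(html)
    finder.close()
    return finder.orphans


before = ('<div class="ul"><li><a href="#">About</a></li>'
          '<li><a href="#">Community</a></li></div>')
after = ('<ul><li><a href="#">About</a></li>'
         '<li><a href="#">Community</a></li></ul>')
print(count_orphan_list_items(before))
print(count_orphan_list_items(after))
```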

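Issue 8: tabindex

"Some elements have a [tabindex] value greater than 0. A value greater than 0 implies an explicit navigation ordering. Although technically valid, this often creates frustrating experiences for users who rely on assistive technologies." Learn more about tabindex rules.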

<button type="submit" tabindex="1">Subscribe</button>

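Let's fix it.

Unless there is a specific reason to disrupt the natural tab order on a web page, there is no need to set a positive integer on a tabindex attribute. To keep the natural tab order, we can either change the tabindex to 0 or remove the attribute altogether.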

<button type="submit">Subscribe</button>
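Positive tabindex values are easy to detect mechanically. This minimal Python sketch lists every element whose tabindex parses as an integer greater than 0; it illustrates the rule and is not Lighthouse's implementation.

```python
from html.parser import HTMLParser


class PositiveTabindexFinder(HTMLParser):
    def __init__(self):
        super().__init__()
        self.found = []  # (tag, tabindex) for every positive tabindex

    def handle_starttag(self, tag, attrs):
        value = dict(attrs).get("tabindex")
        if value is None:
            return
        try:
            number = int(value)
        except ValueError:
            return  # ignore values that are not integers
        if number > 0:
            self.found.append((tag, number))


def positive_tabindexes(html):
    finder = PositiveTabindexFinder()
    finder.feed(html)
    finder.close()
    return finder.found


before = '<button type="submit" tabindex="1">Subscribe</button>'
after = '<button type="submit">Subscribe</button>'
print(positive_tabindexes(before))
print(positive_tabindexes(after))
```

Values of 0 and -1 are not reported, since they do not impose an explicit navigation order.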

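Step 6

Now that you've fixed all of the automated accessibility issues, open a new debug mode page and run the Lighthouse accessibility audit again. Your score should be much better than on the first run.

We've applied all of these automated accessibility updates to a new CodePen.

Next step

Great job. You've accomplished a lot already, but we're not done yet! Next, we'll move on to manual checks, as detailed in the manual accessibility testing module.

Check your understanding

Test your knowledge of automated accessibility testing.

What kinds of testing should you perform to ensure your site is accessible?

What errors can automated testing catch?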